Back in 2006, when the hype around the then-called Web 2.0 “thing” was reaching its peak of inflated expectations, much was said about an article published by Nature the year before, which boldly stated:

“Jimmy Wales’ Wikipedia comes close to Britannica in terms of the accuracy of its science entries, a Nature investigation finds.”

Many organizations then started hoping that all their knowledge management woes had found their savior. If Wikipedia can be as good as Britannica, we could replace all our outdated corporate knowledge repositories with wikis. The wisdom of crowds would work its magic, and we’d have high-quality, up-to-date content we could rely upon.

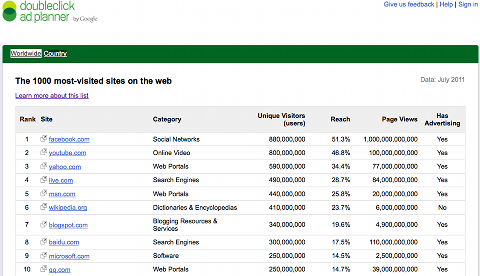

Six years later, we can safely say that the predicted wiki utopia never materialized. Was that just a collective hallucination caused by too many consultants who drank a lot of the Kool-Aid 2.0? How is it that Wikipedia is even more successful today than back then (6th most accessed site worldwide), while a good chunk of our corporate wikis are moribund and abandoned, with content that’s often worse than what our decades-old, expert-based, top-down traditional processes produced? Was Wikipedia just the exception that proved the rule? Is the Britannica model really outdated? Keep reading, and you may find that the actual answer is a bit of all of the above, but with a significant twist.

Before presenting any numbers to demonstrate the success of Wikipedia, just take a look at Wikistream, an impressive visualization of what’s happening in Wikipedia right now. Here’s a snapshot as a teaser, but I highly recommend clicking on the link above for the real deal:

As you can see, at any given moment there is an incredible number of editors frantically updating Wikipedia. Thus, it’s no wonder that enough interesting, high-quality content is produced to convince more than 400 million unique visitors to come by every month. Here’s Google’s estimate of the number of monthly visitors for the top sites on the web (excluding Google’s own):

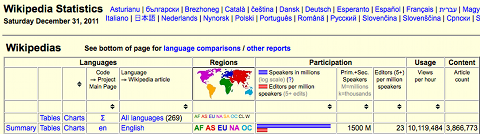

Now, take a look at this second stat: the English version of Wikipedia has approximately 23 editors (generously defined as people with 5 or more contributions) per million speakers, out of roughly 1.5 billion English speakers when you count those with English as a second language. If I did the math right, that means approximately 35,000 editors, who contributed to almost 4 million articles there.

In other words, for a potential audience of 1.5 billion people in the world who can read Wikipedia’s content in English, about 0.0023% are editors. Limit the total target population to English-speaking visitors only (say, for argument’s sake, that only 100 million of the 400+ million mentioned above qualify) and you still end up with 0.035% of the overall readers being editors. Note that it is not 3.5%; it’s 100 times less than that.

Suppose now that you work for a really large corporation, with 100,000 employees, and deploy wikis to your workforce to create the content you need. If the relative ratio of readers vs. editors observed in Wikipedia holds in a corporate context, you would end up with 35 people who would be wiki editors. If you could create a Wikistream of them editing your wikis, you would likely not see any activity for weeks — as opposed to the tens of changes you see every second in Wikipedia.
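For anyone who wants to check the back-of-the-envelope math above or plug in their own headcount, here is a minimal sketch in Python. The figures are the ones quoted in this post; the function and constant names are made up for illustration, and the 100 million English-speaking visitors remains the same for-argument’s-sake guess used above.

```python
# Back-of-the-envelope editor math, using the figures quoted in the post.
# All names here are illustrative, not taken from any real library or dataset.

EDITORS_PER_MILLION_SPEAKERS = 23      # editors with 5+ contributions
ENGLISH_SPEAKERS = 1_500_000_000       # includes second-language speakers
ENGLISH_VISITORS = 100_000_000         # assumption made "for argument's sake"
EMPLOYEES = 100_000                    # the hypothetical large corporation


def estimate_editors(per_million: float, population: int) -> float:
    """Scale a per-million rate up to a whole population."""
    return per_million * population / 1_000_000


def editor_share(editors: float, audience: int) -> float:
    """Fraction of an audience that edits (0.01 means 1%)."""
    return editors / audience


editors = estimate_editors(EDITORS_PER_MILLION_SPEAKERS, ENGLISH_SPEAKERS)
share_of_speakers = editor_share(editors, ENGLISH_SPEAKERS)
share_of_visitors = editor_share(editors, ENGLISH_VISITORS)

# Apply the reader-to-editor ratio to the corporate workforce.
corporate_editors = share_of_visitors * EMPLOYEES

print(f"Estimated Wikipedia editors: {editors:,.0f}")
print(f"Share of English speakers:   {share_of_speakers:.4%}")
print(f"Share of English visitors:   {share_of_visitors:.4%}")
print(f"Editors among {EMPLOYEES:,} employees: {corporate_editors:.1f}")
```

Without rounding, the editor count comes out at 34,500 rather than 35,000, so the corporate estimate lands at about 34.5 people. That is the same order of magnitude as the 35 quoted above. Change `EMPLOYEES` to your own organization’s size to see how small the expected editor pool gets.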

Of course, the ratio is likely to be different in the workplace, but the point here is that, just because Wikipedia works great, there is no guarantee that the wiki in your organization will achieve the same success. On the contrary: it’s very likely that most wikis in your organization will have only 1 or 2 regular contributors – the person who created the wiki and the odd contributor who happens to be “wiki-prone”. The honorable exceptions are likely to be those wikis used by your power users, those who would try almost anything thrown their way.

Still, there is a success story in the Wikipedia vs. Britannica battle that can’t be ignored. See this chart comparing the two back in 2006:

The lesson told by this chart that IS applicable to organizations today has 2 aspects: critical mass and the value of the “networked knowledge”. The first factor is easier to understand. If the whole world is your oyster, 0.035% actually corresponds to a lot of people, and thousands of editors CAN create high-quality content. But in a smaller population, be it your project team, your department, or your whole organization, often there is no wisdom of crowds, but only the wisdom of a handful. You need to have mechanisms in place that enable more eyeballs and more willingness to participate than would normally occur without any intervention. That’s easier said than done. What is the missing secret sauce?

Command-and-control editorial hurdles and the reliance on experts seem more effective in corporations than a wiki approach because the information flows that could unleash the value of wikis are usually limited by low-bandwidth, low-reach channels: mostly person-to-person interactions, meetings, telephone, and emails. However, social technologies in the workplace have evolved considerably over the last 5 years, enabling all those conversations to scale up in volume and reach. Social business platforms, with status updates, microblogging, bookmark/like/share/rate/comment buttons, communities and sub-communities, are going beyond the social networking of people and ultimately enabling knowledge itself to be networked.

It’s essentially a “Web 2.0, meet your successor” shift. As corporations start using those tools more effectively, the vision articulated by David Weinberger (of “Cluetrain Manifesto” and “Everything Is Miscellaneous” fame) in his recent book “Too Big To Know” is starting to be realized:

“As knowledge becomes networked, the smartest person in the room is the room itself: the network that joins the people and ideas in the room, and connects to those outside of it.”

In isolation, an entry in your corporate wiki may never become as good as a popular Wikipedia article, but once you have that “knowledge” networked with the people who wrote it, those who consumed it, their other activities, and their membership in various communities, then you really start unleashing its value. It’s not only the content, it’s not only the expert, it’s not only the crowd in the room, it’s not only the room. It’s the web of all the above connected to all the other rooms, crowds, experts and content. Nova Spivack presciently alluded to that in his blog post depicting “The Metaweb”.

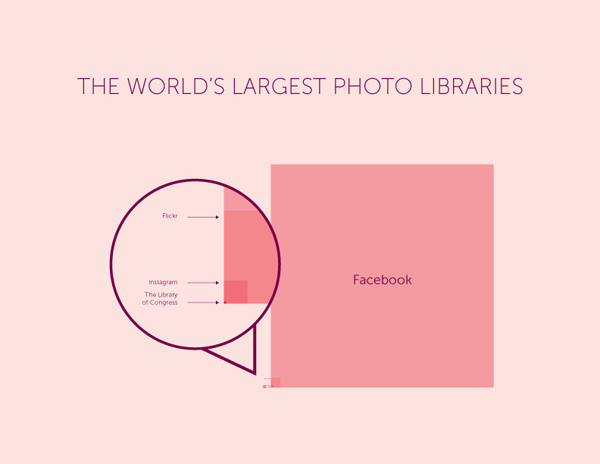

A good way to visualize the power of networked knowledge is through this graphic (by 1000memories, via Bernie Michalik):

In 2006, Flickr was all the rage: the best way to share pictures with others and to keep a repository of our own photo memories. A few years later, Facebook clearly trumped it. Even though FB is not a specialized photo sharing website, the fact that you can share photos, and do several other things, in the same networked platform created the conditions for its photo sharing potential to explode and for it to overtake Flickr as the photo repository of choice for the majority of people on the Internet. The picture above can be seen as a proxy representation of conventional media (the Library of Congress), the Web 2.0 way (in this case, Flickr, of course) and the whatever-comes-next-and-has-no-name-yet (the big Facebook square). This is not to say that Facebook is the successor of Web 2.0, but it does give us a glimpse of the future.

Just to close this post: the limited success of wikis in the workplace should not be an incentive to go back to our old ways. It’s only an indication that we are still taking baby steps towards a better understanding of how networks of people and networks of content can enable us to more efficiently manage knowledge.